The Gap Your Team Can See (and You Probably Can't)

I was trying to build real AI capability with my team, and I was driving the effort with everything I had. I arranged training and gave the team time for research and experimentation that would turn into future projects, but I wasn’t showing them that I was doing it too.

From their perspective I was just managing it and saying “do this”. And after a few months, when I asked the team how they were really using AI, their answer surprised me: they weren’t confident. They could see that I thought it was important, but they couldn’t see that I was going through the same process of learning, discovering and making mistakes.

The LinkedIn article published alongside Episode 104 of the Digital Velocity Podcast was about why you can’t delegate participation.

This piece is about how to measure that gap and close it. Three approaches that don’t require a training program, a new tool, or a six-month change initiative. Just honest reflection, some honest sharing, and one small ask.

What Your Team Thinks

The Signal Audit

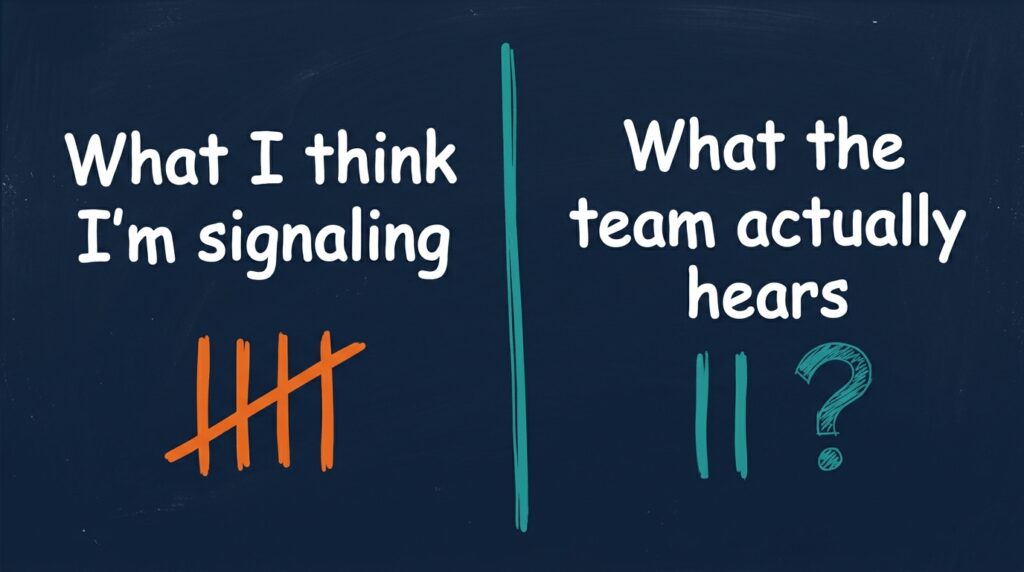

Here is what I know from talking to dozens of owners: most rate themselves an 8 or 9 out of 10 on the priority they place on using AI in their work, but when you ask how their teams are using it, they give their teams a 4 or 5. That delta is a participation gap, and it signals that something is missing in the process. There may be several reasons for it, so here’s one way to find out:

Send your team a simple survey:

Question 1:

“On a scale of 1 to 5, how important does our leadership think AI adoption is?”

1 = Not at all and 5 = They are all in

Question 2:

“On a scale of 1 to 5, how well does our leadership think they demonstrate their commitment to AI adoption within the organization?”

1 = They aren’t doing anything at all and 5 = They are doing everything they can

Question 3:

“On a scale of 1 to 5, how supported do you feel by our leadership in AI adoption initiatives?”

1 = Not at all and 5 = Completely supported

On the first two questions, don’t ask them what they think. Ask them what they think you think. The third question is the one that will create some tension, because it shows the difference between what they think you think and how they feel.
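If you want a quick way to read the results, here is a minimal sketch in Python. The question names, the sample scores, and the two gap calculations are my own assumptions for illustration, not a validated scoring method. It averages each question and subtracts to show how far the team's experience sits below the priority they think you place on AI.

```python
from statistics import mean

# Hypothetical responses: one dict per team member, scores from 1 to 5.
# The keys are illustrative names for the three survey questions.
responses = [
    {"importance": 5, "demonstration": 3, "support": 2},
    {"importance": 4, "demonstration": 3, "support": 3},
    {"importance": 5, "demonstration": 2, "support": 2},
    {"importance": 4, "demonstration": 4, "support": 3},
]

QUESTIONS = ("importance", "demonstration", "support")


def summarize(responses):
    """Return the average score per question plus two gap figures."""
    averages = {q: mean(r[q] for r in responses) for q in QUESTIONS}
    return {
        **averages,
        # Assumed measure: priority the team thinks you hold vs. what they see you do.
        "visibility_gap": averages["importance"] - averages["demonstration"],
        # Assumed measure: what they think you think vs. how supported they feel.
        "support_gap": averages["importance"] - averages["support"],
    }


summary = summarize(responses)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```

With these made-up numbers the team believes leadership cares a lot (4.50) but feels far less supported (2.50). A spread like that is the participation gap showing up in your own data.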

Why does this matter? Because when your team doesn’t see your participation and commitment, they feel isolated, and they don’t know if this is actually important or just the “flavor of the month.” They have jobs to do and deliverables to meet, and they don’t have confidence that AI will make their jobs easier. If the signal from the top is ambiguous, their participation becomes optional, which means it gets delayed, which means it doesn’t happen.

This is where organizations get it wrong. When they see the gap, they commission a training program and buy a subscription. Those things have their place, but they solve the wrong problem. The problem is not knowledge. It is confidence that they will be supported and that the effort will lead to a better result. And that confidence only builds when people see that the people asking them to invest their time are also investing their own time, learning, and failing.

Permission to be Bad

The Visible Failure Archive

I spent years coaching fastpitch softball. One thing that became obvious real fast: you can’t get better at anything without doing the reps. And the early reps are going to be ugly. The first time a young player takes a swing at a rise ball, she is going to miss by a lot. The thing that stops the learning is when a coach makes her feel bad about missing. The thing that accelerates it is when the coach normalizes the miss and sends her back to the box for rep two.

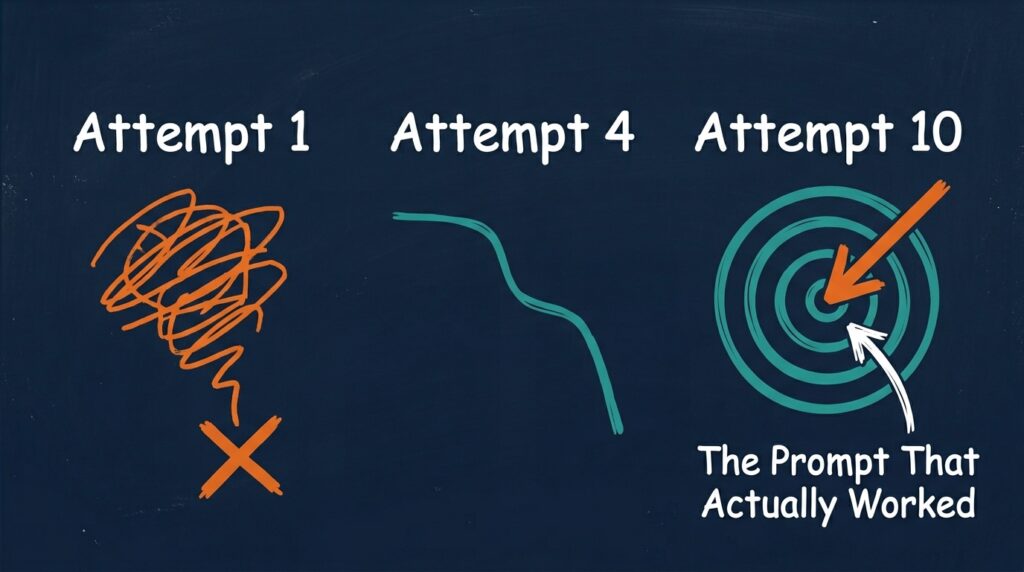

AI adoption works the same way. You and your team need to do more reps. The first prompt you use on any task for the first time is going to be rough and the output is likely going to miss the mark. This is not a problem because this is the basis of learning.

But if the only thing your team sees from leadership is polished outputs, they think polished is where you start even though they know that is not reality. They think they need to be good immediately and they aren’t confident that they can do that, so they don’t try at all.

The antidote is the Visible Failure Archive. Start a shared document, a Slack channel, or a short team meeting, whatever works best for your team, and try this:

Have everyone post (or show) their worst AI attempt alongside what they learned. Don’t filter and don’t curate. Show iteration #1 alongside the 10th iteration so everyone on the team can see the failed prompt next to the one that worked.

Then show the output nobody used alongside the one that saved someone six hours and share the amount of development time that it took to get it there.

This builds confidence because it proves that good output comes from bad output. It normalizes the learning curve and most importantly, when a leader posts their own failure first, they are signaling:

I am in this with you.

I don’t know how to do this yet either.

We are learning together.

That signal is worth more than any training video or tool recommendation.

I have seen this work repeatedly in my own work. My team and I start hesitantly. But when I post a bad prompt with a note like “This was terrible, but here is what I learned about prompt specificity,” suddenly three other people post their attempts. Two weeks in, the archive is full. The team starts to iterate and try new things, and their confidence builds. By month two, the person who was most nervous about AI is the one showing up with new use cases. The only thing that changed was visibility.

The Smallest Ask that Sends the Biggest Signal

The 15-Minute Takeover

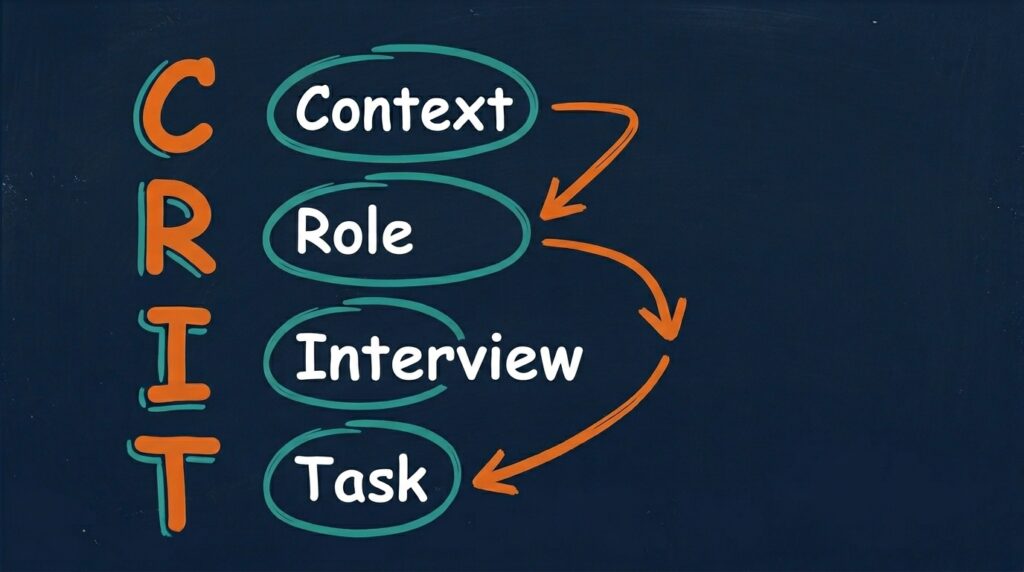

Here is one thing you can do in 15 minutes that starts the journey to solving a real problem: use the CRIT prompt framework with your team. I learned it from Geoff Woods at the MAICON conference in October 2025.

C = Context (describe the problem you are trying to solve in as much detail as you can)

R = Role (define the expertise you are looking for – marketing expert, research expert, creative strategist, etc.)

I = Interview (ask me questions, one at a time, to gain additional context and understanding)

T = Task (after you do the interview step let the AI churn on your problem and offer some initial solutions and then ask it to provide you with “3 non-obvious solutions to solve [the problem]”)

Here is how you run it.

Pick a real problem your team knows. It can be a process bottleneck, a client question that comes up repeatedly, an internal research need, anything.

Bring the team together. Open your AI tool of choice. Read them this prompt:

You are an [expert consultant with deep expertise in XXXXXXXXX] brought in to solve this problem: [INSERT PROBLEM]. Here is what you know about our situation: [INSERT 2-3 SENTENCE CONTEXT]. Interview us. Ask clarifying questions one at a time to understand the root cause, constraints, and desired outcome. After we answer your three questions, give us your top three recommendations with reasoning.

Run through the interview and let the AI ask the questions. Let your team answer them, and then watch as the AI gives you three thoughtful recommendations for a problem you have been circling for months. After you have evaluated those recommendations, ask for the “3 non-obvious solutions to solve [the problem]”.
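If you run this exercise often, it can help to keep the prompt as a reusable template. The sketch below is one hypothetical way to do that in Python. The function names and the sample problem are made up for illustration, and the wording simply follows the prompt above. It only builds the text; you still paste it into whatever AI tool your team uses.

```python
def build_crit_prompt(expertise: str, problem: str, context: str) -> str:
    """Fill in the opening CRIT prompt with your own role, problem, and context."""
    return (
        f"You are an expert consultant with deep expertise in {expertise} "
        f"brought in to solve this problem: {problem}. "
        f"Here is what you know about our situation: {context} "
        "Interview us. Ask clarifying questions one at a time to understand "
        "the root cause, constraints, and desired outcome. "
        "After we answer your three questions, give us your top three "
        "recommendations with reasoning."
    )


def build_follow_up(problem: str) -> str:
    """Build the follow-up ask to use after evaluating the first recommendations."""
    return f"Give us 3 non-obvious solutions to solve {problem}."


# Made-up example values; replace them with a real problem your team knows.
problem = "client onboarding taking three weeks"
prompt = build_crit_prompt(
    expertise="operations and process design",
    problem=problem,
    context=(
        "Every new client needs the same five documents, and we collect "
        "them by email. Two people spend most of a day per client chasing them."
    ),
)
follow_up = build_follow_up(problem)

print(prompt)
print()
print(follow_up)
```

Keeping the template in one place means the only thing that changes from meeting to meeting is the problem, which keeps the ask at fifteen minutes.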

Something lands. Usually more than one person says, “Oh, we didn’t think of that.” Boom. Participation just happened. Without anyone getting trained. Without anyone buying anything.

This changes everything for how you talk about AI adoption at the operator level. Stop asking your team to “support AI adoption” and ask this instead: “Can you give me fifteen minutes in our next meeting to solve one problem?” That is a specific rep that anybody on your team can do over and over again.

And when you run it and your team sees real solutions to a real problem, something happens internally. They realize AI is not some future-state thing. It is something they can use every day on every part of their job.

The Gap and the Antidote

Pat Barry and I opened Episode 104 with a simple truth: Nobody starts confident. Confidence is built through participation and participation is not built through delegation. It is built through visibility and the willingness of leaders to be bad in front of their teams.

The three approaches we talked about are small actions that send your team the strongest possible signal: this matters, and I can show you how.

One short survey to expose the gap.

One shared document of the messy journey to success.

One meeting where the team can experience your participation and commitment.

When you do those three things, your team builds confidence and buys in.